In focus

Published 2 days ago

Review: Endorfy Fortis 5 Dual Fan vs. Navis F240 ARGB

Other articles

Published 4 days ago

Huawei FreeClip review

Huawei is working hard to innovate, and the FreeClip open-ear earbuds are the fruit of that effort. These are interesting earbuds with an entirely new design made up of three connected parts: the acoustic ball, the C-bridge, and the bean-shaped sphere that provides support. The open-ear concept is not new, but Huawei's approach to the design is, aiming for high wearing comfort while preserving awareness of the surroundings. Read the review to find out how much we liked these unusually designed true wireless earbuds.

Objavljeno prije 7 dana

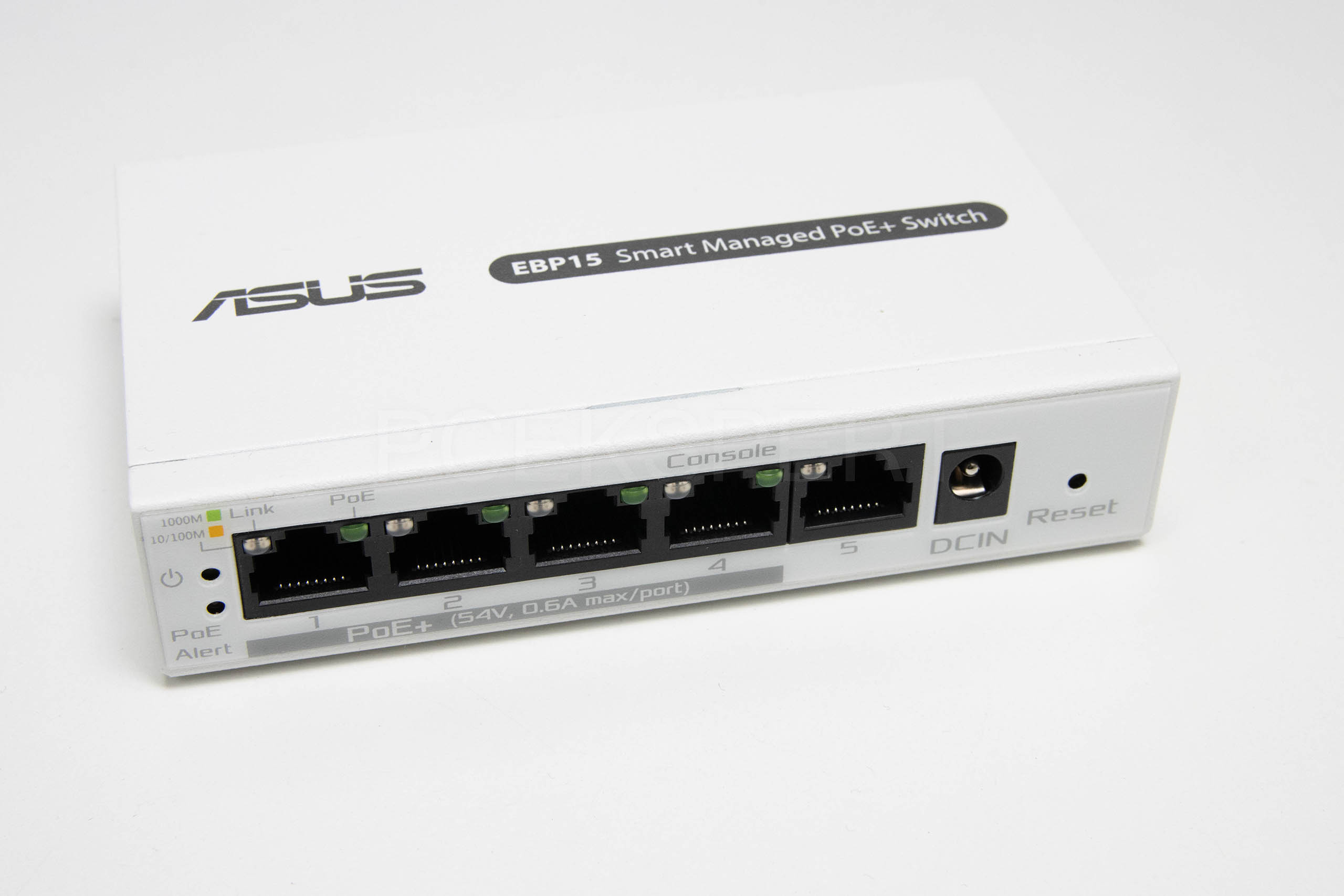

ASUS ExpertWiFi EBP15 recenzija

Za razliku od EBG15 uređaja koji je žičani router, EBP15 je 5-portni gigabitni Smart PoE+ preklopnik ili switch. Donosi četiri PoE porta preko kojih može ukupno isporučiti 60 W snage, podržava podešavanje prioritetnih PoE portova, VLAN, QoS, IGMP snooping i jednostavnu administraciju preko web sučelja ili mobilne aplikacije. Pročitaj više

Published 7 days ago

ASUS ExpertWiFi EBG15 review

Asus has been very active in launching devices in its ExpertWiFi product series, so we bring you two related reviews, of a wired router and a switch. We start with the ExpertWiFi EBG15, a gigabit wired VPN router that gives small and medium-sized businesses a fast, secure, and expandable network. Asus believes there is still a need for routers without wireless capability, and that some companies will keep all or part of their network on wired routers. The EBG15 is aimed at exactly that.

Published 9 days ago

Asus ROG Cetra TWS SpeedNova review

Here we have Asus's new Cetra gaming in-ear true wireless earbuds, which come with a SpeedNova controller for reducing latency. They also support 24-bit 96 kHz audio (L3+), Dirac Opteo technology, bone-conduction AI microphones, adaptive ambient noise cancellation, and Aura RGB lighting. The manufacturer promises solid battery life, and the earbuds can connect over Bluetooth and a 2.4 GHz link at the same time.

News

Dani e-infrastrukture Srce DEI 2024 conference held

The Dani e-infrastrukture Srce DEI 2024 conference was held on 16, 18, and 19 April, organized by the University of Zagreb University Computing Centre (Srce) in cooperation with the University of Zagreb. As sponsors of this year's edition of the...

Virtual memory on smartphones: friend or foe of performance?

Almost every smartphone, regardless of price, has something called virtual memory. It promises to improve multitasking and smoothness. But is it really that useful? What exactly is virtual memory? For those who use...

Ban on phone and social media use for children under 16 in the United Kingdom is heating up dangerously

Despite passing the Online Safety Act in October last year, which requires social networks to protect children from harmful content under threat of fines of 10% of global revenue, the UK Government is considering further...

Buying a used Android phone: tips and tricks for smart shoppers

In a world where the latest phone models change faster than seasonal fashion trends, and their prices are becoming astronomical for most consumers, many are turning to the used-device market. But buying a used phone can be like...

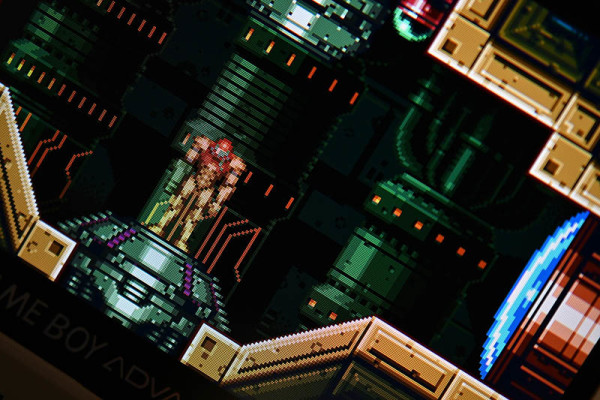

Game Boy emulator disappears just days after approval

Apple approved the iGBA emulator, which let iPhone users play Game Boy titles, including popular games from the Pokémon and Legend of Zelda franchises, by downloading free ROMs from the web. It quickly climbed to the top of the chart of popular...

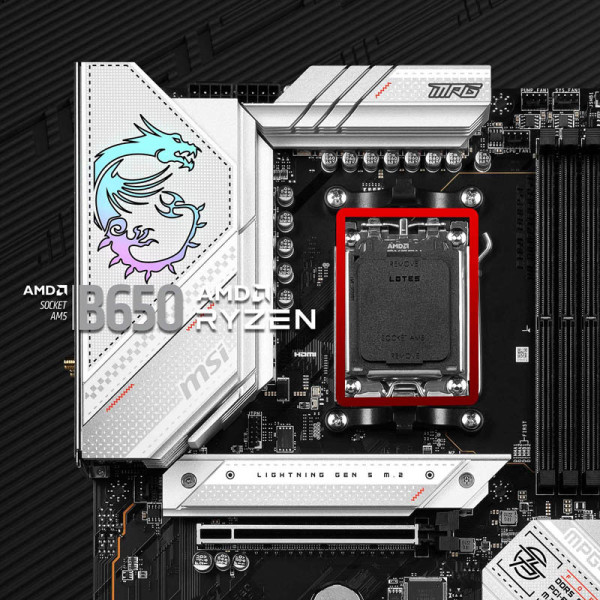

Which AMD B650 motherboards performed best, and which disappointed?

Are you planning to buy or build a new computer? If so, take note of the results of testing carried out by Hardware Unboxed on popular entry-level AMD B650 motherboards aimed at the newer range of AMD Ryzen...

TUXEDO Sirius 16 Gen 2: Linux gaming laptop with Ryzen 7 8845HS

Bavarian company TUXEDO Computers has unveiled its latest Linux gaming laptop, the TUXEDO Sirius 16 Gen 2. It comes in an aluminium chassis with a 16.1-inch anti-glare IPS display with a resolution of 2,560 x 1,440 pixels and a refresh rate of 16...

Another AMD Zen 5 "Granite Ridge" CPU revealed

Industry-adjacent source @ExperteVallah has published the first photo of a next-generation AMD Ryzen desktop CPU, codenamed Granite Ridge, but without the SKU logo and with the QR code hidden. The processor is based on the Zen 5 core architecture and...

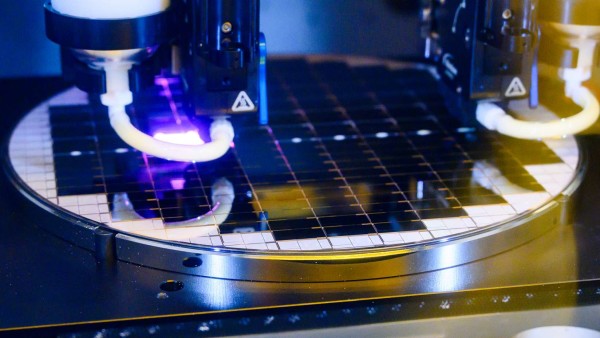

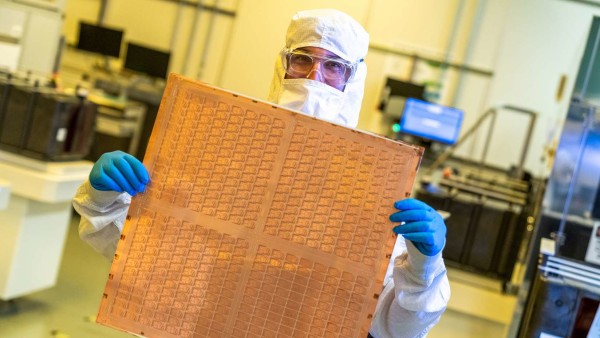

TSMC races into the future with its 2nm process

TSMC's research and development work on 2nm chips is progressing according to plan. If it stays that way, trial production on the 2nm ("N2") process will begin in the second half of 2024, with mass production following in the second quarter of 2025. That means that iPho...

Glass substrates: a new era in chip development?

A small revolution may be under way in the world of chips! Printed circuit boards (PCBs) made from traditional plastic materials are slowly being replaced by pure glass substrates. Word is now spreading that, besides Apple, giants such as Nvidia, AMD, and Intel...

All news

Forum

Published 1 hour ago

Cars

Published 2 hours ago

Which phone to buy? - Part 2

Published 2 hours ago

Your 3D prints

Published 3 hours ago

Fiberland, T-Com Optika (Ultra MAX)

Published 7 hours ago

Movies - impressions, comments and recommendations

Published 8 hours ago

TV series - impressions, comments and recommendations

Published 8 hours ago

K: mITX/mATX config

Published 8 hours ago

K: NGFF SSD (M2)

Published 8 hours ago

M.2 NVMe SSD - specifications, questions, discussion, reviews, firmware updates,...

Published 8 hours ago

Music service